The Question That Killed Itself: The Halting Problem

The Setup: A Simple, Devastating Question

Imagine yourself grading lab submissions at 2 AM as a professor. The code of a student is running. Five minutes have passed. Do you wait or end the process? You want to look at it and see if there are any infinite loops and follow the logic. But what if the program had 10,000 lines? What if it needed information that you didn't have? What if you could write a universal checker that reads any code and tells you for sure if this will stop or if it will run forever? Well Turing proved that checker can't exist. Not because we haven't found it yet. Because the act of it existing creates a logical contradiction that collapses on itself.

The Proof: When Logic Eats Itself

Here is the proof, with all the extra parts taken out.

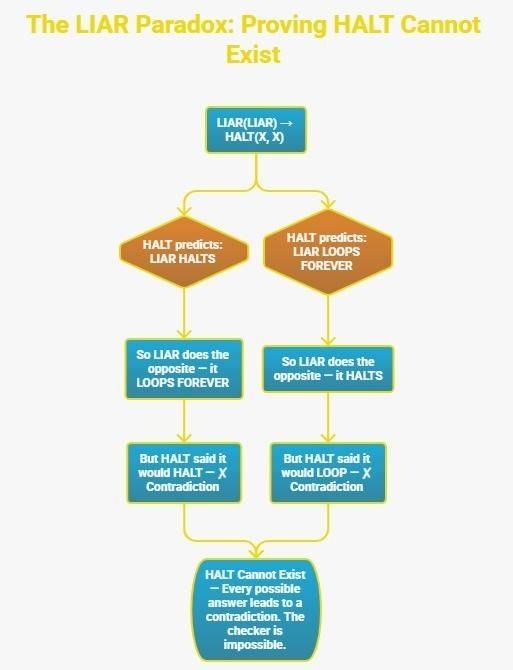

- Let's say, for the sake of argument, that our magical checker, which we'll call HALT(P, I), really does exist. If you give it a program P and an input I, it will always say "halts" or "loops".

- Now make a second program and name it LIAR(X). It only does one thing: it calls HALT(X, X) and gives the program its own input. Then it does the opposite of what HALT says it will do. LIAR loops if HALT says X will stop. If HALT says X loops, LIAR stops.

- Now, ask: What does LIAR(LIAR) do?

- If HALT tells LIAR to stop, LIAR loops. This is a contradiction. LIAR stops if HALT says LIAR will loop. There is confusion and thus no right answer. The checker breaks itself. In other words, HALT cannot exist.

This is a proof by contradiction, and it's essentially the same move Cantor used to show that some infinities are bigger than others. The 2023 CACM Viewpoint, "Using Computer Programs and Search Problems for Teaching Theory of Computation" says it best: "It is impossible to write a program that determines whether other programs will output a given answer on a given input". The Turing Machine formalism and the Python program formalism say the same thing but in different ways.

Why It's Bigger Than It Looks

This is where most ToC courses end. This is where it really gets interesting. It's not a strange edge case that the Halting Problem exists. It's the key to showing that other things can't happen. Researchers use a method called reduction. If you can show that solving Problem X would also let you solve the Halting Problem, then X is also undecidable. A lot of important questions fall into this category:

- Can we automatically check if two programs always do the same thing? Undecidable.

- Can we verify a program is free of all security vulnerabilities? Undecidable.

- Can we guarantee a compiler optimization never breaks correctness? Undecidable.

CACM's "Computing with Real Numbers, from Archimedes to Turing and Beyond" (September 2023) makes this clear: trying to figure out how simple math systems will behave in the long run can be as hard as trying to solve the undecidable Halting Problem. Your buggy for-loop and a disorganized physical system both have the same theoretical limit.

The Part Nobody Talks About: It's Also Practically Fine

In "Self-Reference and Section 230" (CACM, Vol. 61), CACM editor-in-chief Moshe Vardi put a philosophical spin on it: "The undecidability of program termination means that there is no algorithm that can correctly decide when all programs should end. What does that mean?” He is correct. Static analyzers, linters, and formal verifiers don't fix the Halting Problem; they fix limited versions of it. They say that they can guarantee termination for this type of program with certain limits. Java's bytecode verifier, Rust's borrow checker, and Coq's termination checker all work because they only look at a small number of things at a time. After Gödel showed that math can't prove all true things about itself, the mathematicians figured this out.

The Meta-Twist: Did Turing Even Prove It?

Here's a genuinely surprising footnote that most textbooks skip. "Did Turing prove the undecidability of the halting problem?" by Joel David Hamkins and Theodor Nenu, published in the Journal of Logic and Computation in 2024, says that Turing never used the term halting problem and that his machines weren't meant to stop at all. Turing's machines were made to run forever and make endless sequences. He actually showed that the printing problem, whether a machine ever prints a certain symbol was undecidable. The phrase "halting problem" and the way it is used today didn't come together until Martin Davis's 1958 textbook. So, the proof that broke computer science was not exactly the proof we think it was in a historical sense. Which makes sense, since the outcome is based entirely on self-reference and paradox.

Conclusion: Knowing What You Can't Know

The Halting Problem is not an engineering failure. It's a proof of limits, and limits that are well understood are very strong. When you use a type system, a memory-safe language, or a formal verification tool, you're using a system that picks a smaller, easier-to-answer question over a bigger, harder-to-answer one. That tradeoff, scope for certainty is one of the most important choices you can make when designing anything in computer science. Turing didn't just show that something couldn't be done. He was the first to say what the shape of the possible was.

References:

- Hamkins & Nenu, "Did Turing prove the undecidability of the halting problem?", Journal of Logic and Computation, 2024

- CACM Viewpoint: "Using Computer Programs and Search Problems for Teaching Theory of Computation", September 2023

- CACM: "Computing with Real Numbers, from Archimedes to Turing and Beyond", September 2023

- CACM: "Self-Reference and Section 230", Vol. 61, No. 11